200 OK

A poster was able to make a patch that casts the time as 64-bit for the build of wget, and with it wget no longer exhibits this error, but neither of us in that thread feels this is the 'right answer'. I get `certificate has expired` instead of `HTTP request sent, awaiting response.`

The clock is set and synced, but if I issue a wget command:

`wget --force-html --spider --connect-timeout=1 --timeout=10 --tries=2`

wget keeps saying the certificates are invalid on armv7, though they are totally fine. There seems to be a deeper library or gcc issue on RPi armv7 than the patch posted here addresses. I posted this in a couple of other places around here on Arch ARM and ran around chasing strings for a couple of weeks.

Keith

keithspg Posts: 220 Joined: Mon 4:14 pm

To build it completely on RPiOS and have it pass all the tests, I had to install these packages on RPiOS:

`sudo apt install debhelper pkg-config gettext texinfo libidn2-0-dev uuid-dev libpsl-dev libpcre3-dev automake libssl-dev dh-strip-nondeterminism`

I looked at the build requirements in the PKGBUILD and they all seem to be the same between Arch ARM and RPiOS.
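To tell a certificate failure apart from other errors when running the check above, wget's exit status can be inspected: the wget manual documents status 5 as "SSL verification failure". The following is only a sketch; the helper name `check_url` is hypothetical and not something from the thread.

```shell
# Hypothetical helper: run the same spider check and report wget's exit status.
check_url() {
    wget --force-html --spider --connect-timeout=1 --timeout=10 --tries=2 "$1" >/dev/null 2>&1
    status=$?
    case "$status" in
        0) echo "OK: $1" ;;
        5) echo "SSL verification failure: $1" ;;   # documented wget exit status 5
        *) echo "wget exit status $status: $1" ;;
    esac
    return "$status"
}
```

Running `date -u` next to `check_url <your URL>` on both the Arch ARM image and the RPiOS image would show whether the two systems agree on the time while disagreeing on the certificate.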

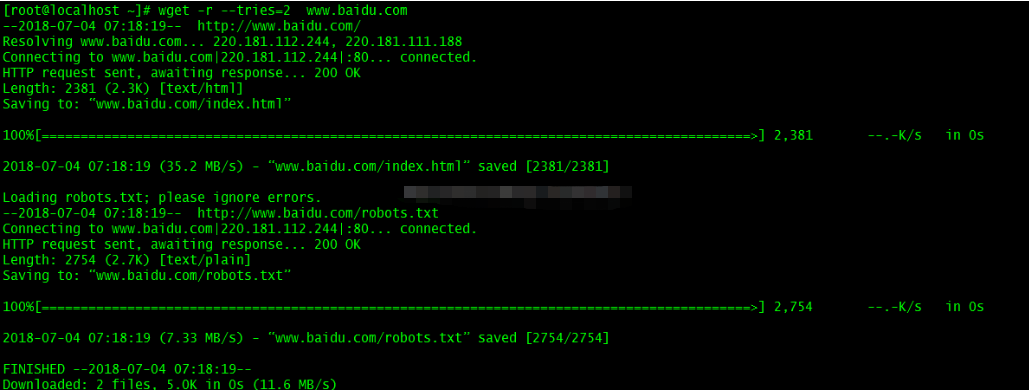

With the default Arch version of wget it says:

`The certificate has expired`

`Loaded CA certificate '/etc/ssl/certs/ca-certificates.crt'`

When I replace the binary on my running armv7 image with the binary I built on RPiOS (/usr/bin/wget) and add a soft link to libpcre.so.3 (`ln -s /usr/lib/libpcre.so /usr/lib/libpcre.so.3`), I am able to get the command I am running to complete:

`# wget --force-html --spider --connect-timeout=1 --timeout=10 --tries=2`

I just built it on RPiOS on armv7 and it builds fine and has no test errors.

keithspg Posts: 220 Joined: Mon 4:14

Do you have any ideas why wget does not build properly from the PKGBUILD on any Arch ARM, and specifically on armv7?

Remote file exists and could contain further links, but recursion is disabled - not

`$ uname -a`

I do not know what is wrong, but it does not seem to be right.

`$ wget --force-html --spider --connect-timeout=1 --timeout=10 --tries=2`

When I fire up a fresh RPi image, wget works as expected. Strange that the PKGBUILD does not build properly on any Arch ARM architecture (armv7 or aarch64).

Note: I would set the sleep count to at least one second (if not more) in order to give the indexer enough time to finish its job and to avoid lock conflicts.

And yeah, it's intentional that I don't use the --spider switch of wget, because it only checks the header response instead of downloading the file, which might not be enough to trigger the indexer.

Well, I dug a bit deeper.
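Regarding the `libpcre.so.3` soft link needed for the RPiOS-built binary: before swapping in a binary built on another distribution, it can help to list which shared libraries it cannot resolve. This is a minimal sketch, assuming the usual glibc `ldd` output format (`name => not found`); the helper name `missing_libs` is hypothetical.

```shell
# Hypothetical helper: read ldd output on stdin and print the names of
# libraries that the dynamic linker could not find.
missing_libs() {
    awk '/not found/ { print $1 }'
}
```

Usage would be `ldd /usr/bin/wget | missing_libs`; an empty result means every dependency resolved.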

Michael Klier recently decided to shut down his blog. Luckily he provides a tarball of all his posts and used a liberal license for his content. With his permission I will repost a few of his old blog posts that I think should remain online for their valuable information. This post was originally posted April 20th, 2009 and is licensed under the Creative Commons BY-NC-SA License.

Some of you might have been in that situation already and know that sometimes it's necessary to spider your DokuWiki, for example if you need to rebuild the search index, or if you make use of the tag plugin and don't want to visit each page on your own to trigger the (re)generation of the needed metadata. Here's a short bash snippet which uses wget that I want to share with you. You have to run it inside of your /data/pages folder or it won't work.

    for file in $(find . -type f); do
        file=$…
        file=$(basename $file '.txt')
        url="…$file"
        wget -nv "$url" -O /dev/null
        … && echo "ERROR fetching $url"
        sleep 1
    done

There are probably one million other ways to do this in bash. The reason I search the pages directory first, instead of using the /data/index/page.idx file, is that pages could have been added by a script and could therefore be missing from the global index.
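A slightly more defensive variant of the spidering loop could look like the sketch below. Several things here are assumptions rather than details from the original post: the base URL `http://wiki.example.org/doku.php?id=`, the helper names `page_id_from_path` and `spider_pages`, and the mapping from file paths to page IDs (namespace directories joined with `:`).

```shell
# Assumption: replace this with your own wiki's base URL.
BASE_URL="${BASE_URL:-http://wiki.example.org/doku.php?id=}"

# Hypothetical helper: turn ./wiki/syntax.txt into the page ID wiki:syntax.
page_id_from_path() {
    printf '%s\n' "$1" | sed -e 's|^\./||' -e 's|\.txt$||' -e 's|/|:|g'
}

# Hypothetical helper: request every page below the current directory once.
# The page is downloaded and discarded (no --spider), so the indexer runs.
spider_pages() {
    find . -type f -name '*.txt' | while IFS= read -r file; do
        url="${BASE_URL}$(page_id_from_path "$file")"
        wget -nv "$url" -O /dev/null || echo "ERROR fetching $url"
        sleep 1   # give the indexer time to finish and avoid lock conflicts
    done
}
```

As with the original snippet, this would be run from inside the /data/pages folder, for example with `BASE_URL=... spider_pages` after sourcing the file.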
